- AI is showing up everywhere in the GTM stack, but most of it is built for marketers and salespeople — not for the ops professionals who actually run the systems.

- Marketing ops lives between people and systems. The job is reactive, logic-heavy, and documentation-starved, and AI is only beginning to touch it.

- Where AI helps today: prospect research and enrichment (Clay), enablement and training (ChatGPT), and documentation. Where it doesn't: dirty data processing, audience logic building, and the judgment calls that keep systems honest.

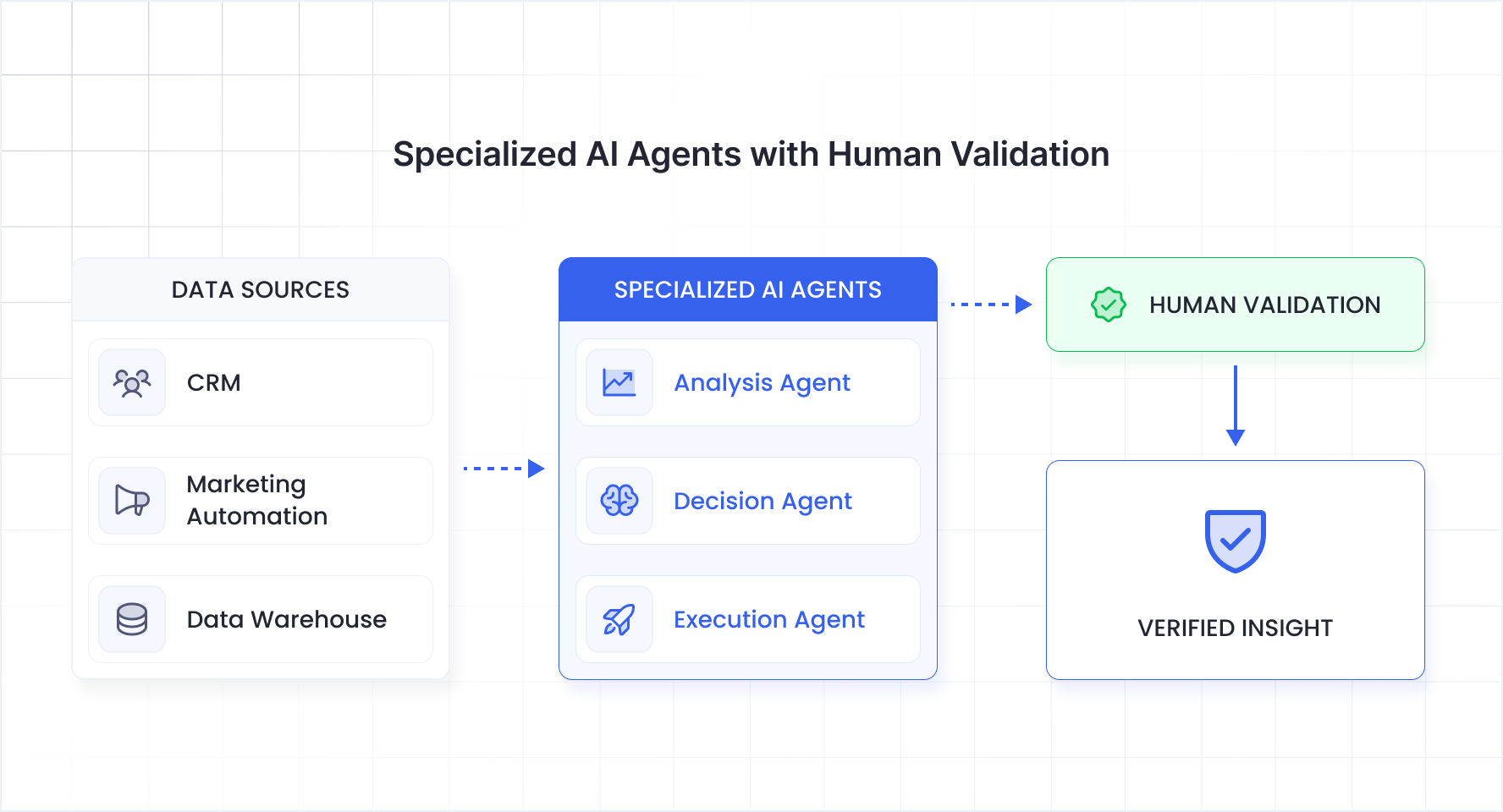

- The biggest guardrail isn't a feature, it's architecture. Specialized agents with human-in-the-loop review beat a single AI trying to do everything.

- If you don't validate outputs, the system trains itself that mistakes are correct and the errors compound silently.

Watch the full conversation below.

Grace Harbison describes herself as a systems thinker with a bias for clarity, clean data, and execution that actually sticks. She's spent over a decade in marketing and revenue operations, helping fast-moving SaaS organizations turn strategy into motion. She's led full martech overhauls, rebuilt lifecycle frameworks, cleaned up messy attribution models, and designed lead scoring and routing systems that hold up under pressure.

She started in 2013 as a sales and marketing admin at an organization where a leader saw something in her that she didn't yet see in herself — an aptitude for systems — and challenged her to learn Salesforce and Excel.

Her first Marketo implementation was back when the logo was still purple. Since then, she's held senior marketing ops and revenue ops roles at CircleCI, SecurityPal, Optimizely, and CloudBees, working across Dynamics, Pardot, Salesforce Marketing Cloud, and HubSpot along the way.

She recently joined Paminga, a marketing automation platform built specifically for marketing ops professionals, as Head of Marketing & MOps Advocacy, after first encountering the product as a practitioner evaluating it during a vendor audit.

What makes Grace's perspective worth listening to is not just her depth across systems, but her clarity about what the job actually is. Marketing ops, she says, didn't exist as a dedicated career fifteen years ago. The platforms came first — and the people who manage them had to invent the role around what the technology demanded.

"The people have evolved," she says. "The technology hasn't."

Marketing and Marketing Ops: Two Legs on the Same Body (But Very Different Jobs)

When AI conversations come up in marketing teams, they tend to focus on the creative side: brainstorming campaigns, generating copy, iterating on messaging. Grace sees a clear split that most people overlook.

She describes marketing and marketing ops as two legs on the same body.

Marketing is the visionary side: the artists and strategists. Marketing ops is the architecture side: the people who make the vision actually run. The tools that AI has unlocked for marketers (content generation, brainstorming, creative iteration) are genuinely useful. But they're solving a different person's problem.

"In terms of how to help marketing ops," Grace explains, "it's about what tasks we're doing on a daily or weekly basis that we could automate, or at least have something that can answer the questions because a lot of ops is answering questions."

This is the distinction most AI vendors miss. The marketing ops professional isn't trying to write better copy. They're trying to build an audience list using boolean logic across three systems, answer a stakeholder's one-off analytics question without derailing their afternoon, and document the workflow they just built before someone asks them to build the next one. AI has barely begun to touch that world.

Where AI Actually Helps: Three Marketing Ops Use Cases That Work Today

Ask Grace where AI has genuinely made her work better, and she groups it into three categories, each one grounded in something she's actually done, not something a vendor promised.

1. Analytics and Ad-Hoc Reporting

The first and biggest category is answering analytical questions. Not building dashboards; those are their own workstream. She's talking about the one-off report requests that show up in Slack on a Tuesday morning from a marketing leader or a BDR manager who needs to know how a campaign is performing right now.

"I am typically the person that pulls that report," Grace says. "Then when you pull a report, pulling a report often pulls a thread. They have questions about what the data means, what the definitions are. If you don't have that well documented, the original question sprawls into this huge conversation."

An AI system that could field those questions by working from established definitions and the actual data would reclaim significant hours every week.

2. Audience Building with Natural Language

Marketing ops professionals think in logic: boolean operators, filter combinations, inclusion and exclusion criteria across multiple systems. Grace's second wish is a tool where she could describe an audience in plain language and have the system architect the query based on definitions she's already provided.
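As a rough illustration of what "architecting the query" from pre-agreed definitions could look like, here is a minimal Python sketch. The field names (`title`, `employees`, `is_customer`), the three definitions, and the helper functions are all hypothetical, and the step that would translate a plain-language request into this structure is not shown.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]
Rule = Callable[[Record], bool]

# Hypothetical shared definitions an ops team might document once and reuse.
DEFINITIONS: Dict[str, Rule] = {
    "is_ops_title": lambda r: "ops" in str(r.get("title", "")).lower(),
    "is_mid_market": lambda r: 200 <= int(r.get("employees", 0)) <= 2000,
    "is_customer": lambda r: bool(r.get("is_customer", False)),
}


def all_of(*names: str) -> Rule:
    """AND: every named definition must hold."""
    return lambda r: all(DEFINITIONS[n](r) for n in names)


def any_of(*names: str) -> Rule:
    """OR: at least one named definition must hold."""
    return lambda r: any(DEFINITIONS[n](r) for n in names)


def build_audience(records: List[Record], include: Rule, exclude: Rule) -> List[Record]:
    """Keep records that match the inclusion rule and do not match the exclusion rule."""
    return [r for r in records if include(r) and not exclude(r)]


# Plain-language request: "ops titles at mid-market companies, excluding existing customers."
contacts = [
    {"email": "a@example.com", "title": "Marketing Ops Manager", "employees": 500, "is_customer": False},
    {"email": "b@example.com", "title": "Revenue Ops Lead", "employees": 900, "is_customer": True},
    {"email": "c@example.com", "title": "Account Executive", "employees": 700, "is_customer": False},
]
audience = build_audience(
    contacts,
    include=all_of("is_ops_title", "is_mid_market"),
    exclude=any_of("is_customer"),
)
audience_emails = [r["email"] for r in audience]
```

The point of the sketch is that the definitions live in one place: the plain-language request only has to name them, so two people asking for "mid-market" get the same filter.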

3. Documentation

The third category is the one most ops professionals will recognize immediately. Documentation takes enormous amounts of time, and teams that are constantly in reactive mode never find the time to do it, even though everyone knows it would relieve future pressure.

Grace has leaned into AI heavily here, using it to help structure, draft, and polish internal documentation that would otherwise sit in someone's head or get captured in a Slack thread that nobody can find six months later.

AI Is Limitless (And Why That's Bad)

Grace keeps coming back to a tension that defines how she evaluates AI tools: the difference between capability and clarity.

Clay, for example, is a tool she uses regularly and considers an uncuttable budget item. She likes what it can do: person-level research, company-level research, personalized outbound copy, ICP identification. But she's blunt about its limitations for an ops professional.

"The sky's the limit," she says. "So it's very hard to look at Clay and understand exactly where you want to start. I love a tool with a clear use case."

This is a pattern she sees across horizontal AI platforms. They're powerful, but they require the user to arrive with a fully formed vision of what they want to build. For a marketing ops professional who's already juggling a dozen systems and fifty Slack messages a day, that's a significant barrier.

The tools that work best, in her experience, are the ones that show up with a clear use case already defined, where the value is obvious before you start configuring.

The Guardrail Framework: Manufacturing Floors and Multi-Agent Architecture

When the conversation turns to the future — a world where AI agents handle more of the orchestration, where systems analyze data and act on recommendations with minimal human intervention — Grace doesn't dismiss it. But she has a clear framework for what has to be true before she'd trust it.

She reaches for a manufacturing analogy. A plant produces one product at the end, but it has a different station intentionally designed for each aspect of that product. She looks at AI the same way.

"I don't want one AI agent to rule them all," she says, "because as soon as something goes haywire, that's where I'm really going to struggle to figure out what went haywire."

Her preferred architecture: multiple specialized agents, each responsible for a discrete function, with a human in the loop who can inspect the output at each station. When something breaks, you can trace it back to the specific agent that made the bad call, rather than debugging a monolithic system.

This isn't just a technical preference; it's an operational philosophy. The same instinct that makes a good ops person insist on documented definitions and clean handoffs between systems applies directly to how AI should be structured.

The three guardrails Grace advocates for:

First, human-in-the-loop validation — configurable, so you can slowly expand the automation boundary as you build confidence. Where the system has proven itself reliable, let it run. Where it hasn't, keep a human checking.

Second, multi-agent architecture over monolithic AI. Each agent operates independently, which means you can do root cause analysis when something goes wrong, not just on errors but on judgment calls. If an agent made a decision, you need to understand what data it used and why.

Third, explainability at the judgment level. It's not enough to know that an agent produced the wrong output. You need to understand the reasoning path that led there, especially because agents that don't get corrected will learn from their own mistakes and compound them.
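One way to picture the three guardrails together is a small Python sketch of a "floor" of stations. This is an illustration under assumptions, not a description of any product: `Station`, `Floor`, `TraceEntry`, the two example stations, and the stand-in reviewer are all hypothetical names, and the "agents" here are ordinary functions that return an output plus a stated reason.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Payload = Dict[str, object]


@dataclass
class TraceEntry:
    station: str
    inputs: Payload
    output: Payload
    reason: str      # the station's stated reasoning, kept for root cause analysis
    approved: bool


@dataclass
class Station:
    """One specialized agent: a single function that returns (output, reason)."""
    name: str
    run: Callable[[Payload], Tuple[Payload, str]]
    needs_review: bool = True  # configurable human-in-the-loop gate


@dataclass
class Floor:
    stations: List[Station]
    reviewer: Callable[[str, Payload, str], bool]
    trace: List[TraceEntry] = field(default_factory=list)

    def process(self, payload: Payload) -> Payload:
        for station in self.stations:
            output, reason = station.run(dict(payload))
            approved = (not station.needs_review) or self.reviewer(station.name, output, reason)
            self.trace.append(TraceEntry(station.name, dict(payload), dict(output), reason, approved))
            if not approved:
                # Stop here so a rejected output never feeds later stations.
                raise ValueError(f"Output rejected at station '{station.name}': {reason}")
            payload = output
        return payload


def normalize(p: Payload) -> Tuple[Payload, str]:
    out = dict(p)
    out["email"] = str(p["email"]).strip().lower()
    return out, "trimmed and lowercased email"


def score(p: Payload) -> Tuple[Payload, str]:
    out = dict(p)
    out["score"] = 50 if str(p["email"]).endswith("@example.com") else 10
    return out, "scored on email domain"


def stand_in_reviewer(station: str, output: Payload, reason: str) -> bool:
    # Placeholder for a person; approves any output with a plausible score.
    return int(output.get("score", 0)) <= 100


floor = Floor(
    stations=[Station("normalize", normalize, needs_review=False), Station("score", score)],
    reviewer=stand_in_reviewer,
)
result = floor.process({"email": "  Pat@Example.com "})
stations_seen = [t.station for t in floor.trace]
```

Setting `needs_review=False` on a station is the "let it run" case for work that has proven reliable, and the `trace` list is what makes it possible to see which station made a given call, on what input, and for what stated reason.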

The Expensive Manual Problem Nobody's Solved Yet

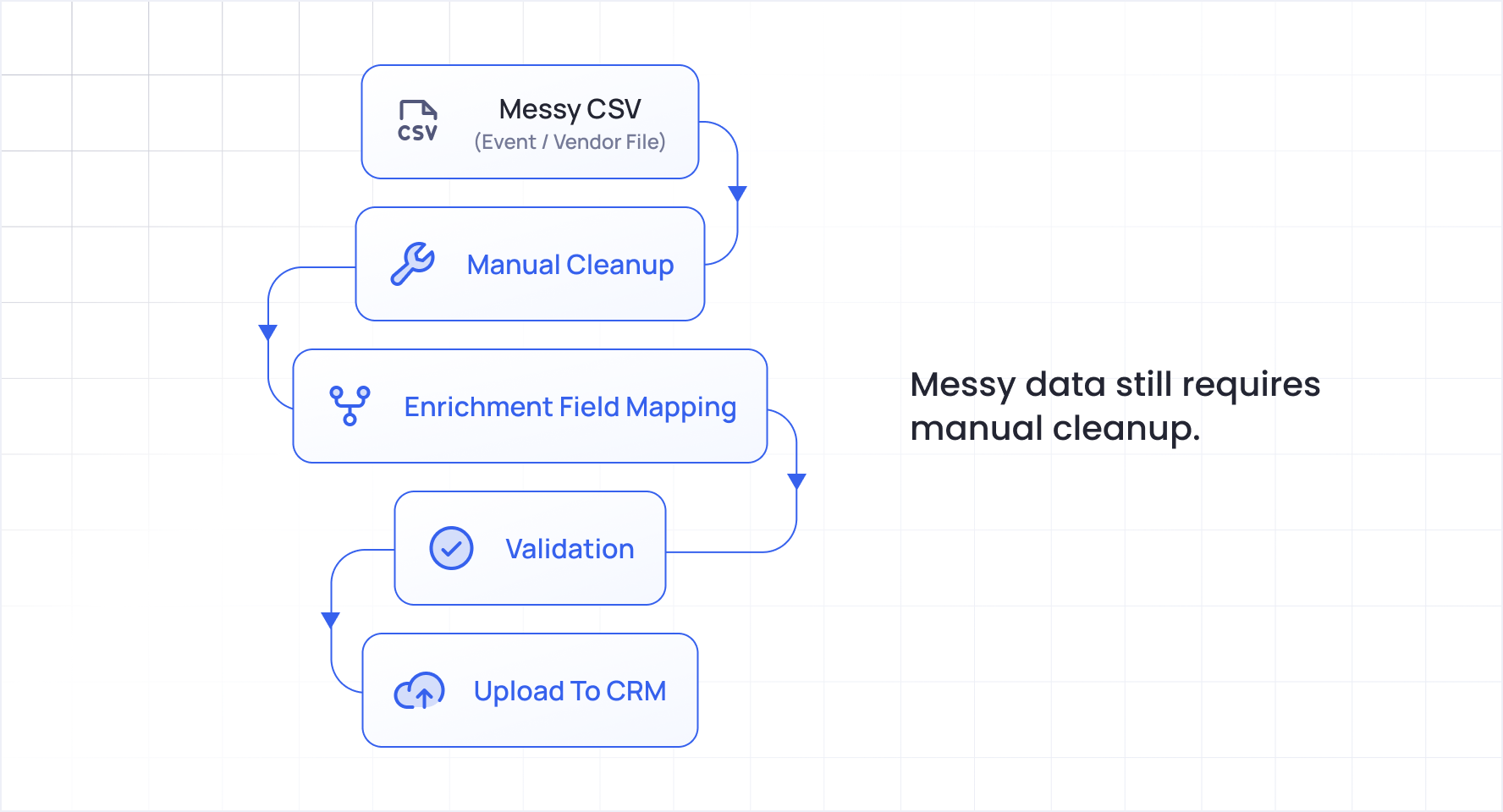

When asked about the most expensive recurring manual effort that still doesn't have a good tool, Grace doesn't hesitate: data processing.

Not analytics. Not reporting. The raw, unglamorous work of receiving a messy CSV from an event, a partner, a vendor (a file that's missing fields, has inconsistent formatting, contains duplicates) and being told to load it into the system.

"So many times in my career I've been handed this giant CSV from a game site or something," she says, "and it is disgusting and hideous and it's missing so many different data points. And they'll give it to ops and say, go ahead and load this in the system."

The gap isn't that tools don't exist to enrich or validate data. Clay can do enrichment. Various platforms can do deduplication. But the full pipeline (take a dirty file, validate it against your data model, clean the formatting, enrich what's missing, flag what can't be enriched, and upload it) still requires a human ops person stitching multiple tools together manually.
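To show the shape of that pipeline in one place, here is a minimal sketch using only Python's standard library. The required fields, the sample file, and the domain lookup that stands in for a real enrichment tool are all assumptions made for illustration, and the final upload step is left out.

```python
import csv
import io
from typing import Dict, List, Tuple

REQUIRED_FIELDS = ("email", "first_name", "company")  # hypothetical data model


def clean_row(row: Dict[str, str]) -> Dict[str, str]:
    """Normalize formatting: tidy header names, trim whitespace, lowercase emails."""
    cleaned = {k.strip().lower(): (v or "").strip() for k, v in row.items() if k}
    cleaned["email"] = cleaned.get("email", "").lower()
    return cleaned


def enrich(row: Dict[str, str], lookup: Dict[str, str]) -> Dict[str, str]:
    """Fill a missing company from a lookup keyed by email domain (stand-in for enrichment)."""
    if not row.get("company") and "@" in row["email"]:
        domain = row["email"].split("@", 1)[1]
        row["company"] = lookup.get(domain, "")
    return row


def process_file(text: str, lookup: Dict[str, str]) -> Tuple[List[Dict[str, str]], List[Dict[str, str]]]:
    """Return (rows ready to load, rows flagged for a human)."""
    ready, flagged, seen = [], [], set()
    for raw in csv.DictReader(io.StringIO(text)):
        row = enrich(clean_row(raw), lookup)
        email = row["email"]
        if email and email in seen:
            continue  # drop duplicates by email
        seen.add(email)
        missing = [f for f in REQUIRED_FIELDS if not row.get(f)]
        if missing:
            row["missing"] = ",".join(missing)  # flag what could not be filled
            flagged.append(row)
        else:
            ready.append(row)
    return ready, flagged


SAMPLE = """Email, First_Name ,Company
 Pat@Example.com ,Pat,
pat@example.com,Pat,Example Inc
sam@unknown.io,Sam,
,Lee,Acme
"""
ready, flagged = process_file(SAMPLE, lookup={"example.com": "Example Inc"})
ready_emails = [r["email"] for r in ready]
flag_reasons = [r["missing"] for r in flagged]
```

Even this toy version has to make judgment calls (which copy of a duplicate wins, what counts as "missing"), which is part of why the real work still lands on a person.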

She compares it to a very smart Excel: the capability exists, but you need specialized knowledge to make it work, and for most one-off jobs, it's faster to just do it yourself than to build the automation.

AI Won't Take the Job. But It Will Change What the Job Is

The question that comes up in every conversation about AI and ops roles: will it eliminate the job?

Grace's answer is direct. It gives everyone heartburn, she says, but it doesn't scare her.

"AI becomes part of my job. It can't take my job. It can try, but it doesn't have that human solutioning. It only knows as much as it knows. It struggles to make things up. It's just referencing what it knows. Whereas humans, we do come up with different approaches all the time."

Her mental model is practical: most marketing ops professionals are working on about 25% of what they could be doing; the rest gets crowded out by reactive work and manual tasks. In an ideal future, agents take over that reactive and manual work, freeing the human to focus on the strategic 75% that currently gets crowded out.

But the human doesn't disappear. They become the quality layer. The architect. The person who designs the system, validates the output, and catches the errors before they compound.

She draws from the manufacturing parallel again: when factories introduced machines to the plant floor, it eliminated some roles but created new ones. You still need mechanics. The machines don't just run themselves in a building all day.

The Hype Around AI Replacing Humans

Grace sees a troubling dynamic in how the industry is responding to AI. It's become polarizing. People either lean all the way in and use it daily (sometimes losing their ability to think independently) or they don't touch it at all and risk falling behind.

Both extremes are dangerous, she argues. Over-reliance erodes independent thinking. Complete avoidance means the learning curve compounds as the technology accelerates.

The hype she calls out most directly: the idea that AI will replace humans entirely. She traces it back to the fundraising pressure on AI companies. When you're already at a half-trillion-dollar valuation, the only thing left to sell is a vision of total automation.

The reality, from inside the ops function, is far more mundane and far more interesting. AI is a powerful tool for rinse-and-repeat work. But when you need to iterate, evolve, and solve problems that haven't been solved before, it's not going to produce the final output like a person can.

What "More Is Not Best" Really Means

When asked for the one belief that consistently puts her at odds with stakeholders, Grace doesn't hesitate: more is not best.

Marketing teams measure success by MQL volume. Sales teams point to open pipeline. Grace measures differently. She cares about conversion, not count.

"I've seen a lot of sales teams say, look at all of my open pipeline," she explains. "But I like consequences and I like rules. If you haven't done any of these actions in a certain amount of time on an opportunity, it's not valid and it represents to me a false positive in the pipeline."

This extends to her views on pre-pipeline opportunities: the gray area where a lead has shown interest but hasn't become a real opportunity yet. She's fine measuring that activity, but she won't associate dollars with it. The moment you do, pipeline looks bloated, win/loss ratios get distorted, and the data stops being trustworthy.

It's the same instinct that drives her approach to AI: precision over volume, validation over speed, clean data over more data.
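The pipeline rule Grace describes (no qualifying activity within a set window means the opportunity is not valid) can be written down in a few lines. This is a sketch under assumptions: the 30-day threshold, the `Opportunity` fields, and the sample deals are invented for illustration and are not from the conversation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List

STALE_AFTER = timedelta(days=30)  # hypothetical threshold; pick your own rule


@dataclass
class Opportunity:
    name: str
    amount: float
    last_activity: date


def is_valid(opp: Opportunity, today: date, stale_after: timedelta = STALE_AFTER) -> bool:
    """An opportunity counts only if it has seen activity within the window."""
    return today - opp.last_activity <= stale_after


def trusted_pipeline(opps: List[Opportunity], today: date) -> float:
    """Sum dollars only for opportunities that pass the activity rule."""
    return sum(o.amount for o in opps if is_valid(o, today))


today = date(2024, 6, 30)
opps = [
    Opportunity("Deal A", 10_000.0, date(2024, 6, 20)),  # active 10 days ago
    Opportunity("Deal B", 25_000.0, date(2024, 3, 1)),   # no activity for months
]
open_total = sum(o.amount for o in opps)
trusted_total = trusted_pipeline(opps, today)
```

In this made-up example the open pipeline is 35,000 but only 10,000 passes the rule; the gap between the two numbers is the false positive she is talking about.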

The Uncuttable Item: If You Rebuild Your Marketing Ops Stack in 30 Days, Start With a Thinking Partner

When pressed on what she'd buy first if rebuilding from scratch, Grace is torn between Clay and ChatGPT, and ultimately leans toward the latter.

Not because ChatGPT is the best AI tool. She's aware of alternatives and mentions Anthropic's Claude. But what she values most right now is a system she can build an agent in, one that helps her think: a tool she can verbally release her thoughts to and have it produce output in her own voice.

"Content is so critical, communication is so critical, documentation is so critical," she says. "I need something that I can just release my thoughts to and it will spit it out in my own words."

It's a revealing priority. The most valuable AI tool for a marketing ops professional isn't the most powerful data platform or the most sophisticated automation engine. It's the one that acts as a thinking partner, helping bridge the gap between what's in your head and what needs to be on paper.

A Message to Ops Professionals

Grace closes with something that isn't about AI or technology at all. It's about the people who do this work.

"It's a very hard job that feels made up when you talk about it," she says. "You live between systems and people. It's exhausting. It can be emotionally draining trying to please people, especially where marketing and sales people tend to have very strong personalities because they need to be loud."

Ops people, she observes, tend to think of themselves as quieter. The wallflowers. And the hardest truth of the role is that if you're doing a good job, you won't hear about it. The only time anyone notices is when something breaks.

"Cherish the silent days," she says. "Cherish the days you don't hear about the errors. Nobody's going to say, 'I got through the day without a problem, and that's because of you.' But you did that."

Grace Harbison is Head of Marketing & MOps Advocacy at Paminga, a marketing automation platform built for the way marketing ops professionals actually work. She has spent over a decade in marketing and revenue operations at companies including CloudBees, Optimizely, SecurityPal, and CircleCI, leading martech overhauls, lifecycle frameworks, and lead scoring systems across Salesforce, Marketo, HubSpot, and more. She is based in Lake Jackson, Texas.

You can follow Grace's work and perspective on marketing ops on LinkedIn.